Author's Note 1: It is my standard policy to put too much info into guides so that those who are searching for specific problems they come across will find the offending text in their searches. With luck, your "build error" search sent you here.

Author's Note 2: It's not as bad as it looks (I've included lots of output and error messages for easy searching)!

Author's Note 3: I won't be much help for you in diagnosing your errors, but am happy to tweak the text below if something is unclear.

Conventions: I include both the commands you type in your Terminal and some of the output from these commands. The output is where most of the errors I discuss appear.

Input is formatted as below:

Text you put in (copy + paste should be fine)

Output is formatted as below:

Text you get out (for checking results and reproducing errors)

1. Introduction

This work began as an attempt to build a CUDA-friendly version of the molecular dynamics package GROMACS (which will come later). For reasons stemming from a new local Syracuse Meetup Group (Bitcoin's of New York – Miner's of Syracuse. Consider joining!), the formation of our very own local mining pool (Salt City Miners, miner.saltcityminers.com. Consider joining!), plus a "what the hell" to see if it was an easy build or not, it transformed into the CudaMiner-centric compiling post you see here.

NOTE: This will be a 64-bit-centric install but I'll include 32-bit content as I've found the info on other sites.

2. Installing The NVIDIA Drivers (Two Methods, The Easy One Described)

Having run through this process many times in a fresh install of Ubuntu 12.04 LTS (so nothing else is on the machine except 12.04 LTS, its updates, a few extra installs, and the CUDA/CudaMiner codes), I can say that what is below should work without hitch AFTER you install the NVIDIA drivers. Once your NVIDIA card is installed and Ubuntu recognizes it, you've two options.

2A. Install The Drivers From An NVIDIA Download (The Hard Version)

A few websites (and several repostings of the same content) describe the process of installing the NVIDIA drivers the olde-fashioned way, in which you'll see references to "blacklist nouveau," "sudo service lightdm stop," Ctrl+Alt+F1 (to get you to a text-only session), etc. You hopefully don't need to do this much work for your own NVIDIA install, as Ubuntu will do it for you (with only one restart required).

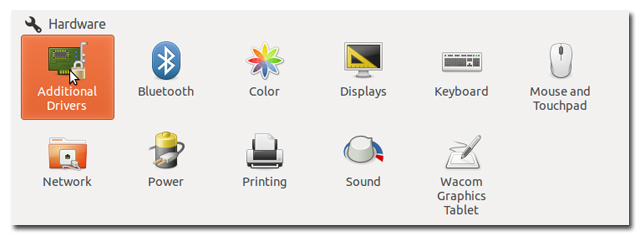

2B. Install The Drivers After The "Restricted Drivers Available" Pop-Up Or Go To System Settings > Available Drivers (The Easy, Teenage New York Version)

I took the easy way out by letting Ubuntu do the dirty work. The result is the installation of the (currently, as of 28 Dec 2013) v. 319 NVIDIA accelerated graphics driver. For my NVIDIA cards (GTX 690 and a GTX 650 Ti, although I assume it's similar for a whole class of NVIDIA cards), you're also (currently, check the date again) given the option of v. 304. Don't! I've seen several mentions of CudaMiner (and some of the CUDA Toolkit) requiring v. 319.
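To confirm which driver version actually ended up loaded, something like the following sketch may help. It assumes the proprietary driver exposes its version in /proc/driver/nvidia/version (absent under nouveau); the function names and the 319 threshold variable are my own, chosen to match the version mentioned above.

```shell
# Minimum driver major version cited in this guide for CudaMiner / CUDA 5.5.
MIN_DRIVER_MAJOR=319

# parse_driver_version: print the first dotted number (e.g. 319.37) found on stdin.
parse_driver_version() {
    grep -oE '[0-9]+\.[0-9]+' | head -n 1
}

# driver_major_ok VERSION: succeed if the major part of VERSION is >= MIN_DRIVER_MAJOR.
driver_major_ok() {
    major="${1%%.*}"                      # "319.37" -> "319"
    [ "$major" -ge "$MIN_DRIVER_MAJOR" ] 2>/dev/null
}

# Assumption: this file exists only while the proprietary NVIDIA module is loaded.
if [ -r /proc/driver/nvidia/version ]; then
    ver="$(parse_driver_version < /proc/driver/nvidia/version)"
    if driver_major_ok "$ver"; then
        echo "driver $ver looks new enough"
    else
        echo "driver $ver is older than v. $MIN_DRIVER_MAJOR"
    fi
else
    echo "no NVIDIA driver information found (is the proprietary driver loaded?)"
fi
```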

3. Pre-CUDA Toolkit Install

There are a few apt-get's you need to do before installing the CUDA Toolkit (or, at least, the consensus is that these must be done. I've not seen a different list in any posts and I didn't bother to install one-by-one to see which of these might not be needed).

If you perform the most commonly posted apt-get (plus an update and upgrade if you've not done so lately):

user@host:~/$ sudo apt-get update

user@host:~/$ sudo apt-get upgrade

user@host:~/$ sudo apt-get install freeglut3-dev build-essential libx11-dev libxmu-dev libxi-dev libgl1-mesa-glx libglu1-mesa libglu1-mesa-dev

You'll get the following error from a fresh 12.04 LTS install:

Reading package lists… Done

Building dependency tree

Reading state information… Done

libglu1-mesa is already the newest version.

libglu1-mesa set to manually installed.

Some packages could not be installed. This may mean that you have

requested an impossible situation or if you are using the unstable

distribution that some required packages have not yet been created

or been moved out of Incoming.

The following information may help to resolve the situation:

The following packages have unmet dependencies:

libgl1-mesa-glx : Depends: libglapi-mesa (= 8.0.4-0ubuntu0.6)

Recommends: libgl1-mesa-dri (>= 7.2)

E: Unable to correct problems, you have held broken packages.

The solution here is simple. Add libglapi-mesa and libgl1-mesa-dri to your install.

user@host:~/$ sudo apt-get install freeglut3-dev build-essential libx11-dev libxmu-dev libxi-dev libgl1-mesa-glx libglu1-mesa libglu1-mesa-dev libglapi-mesa libgl1-mesa-dri

Doing this will add a bunch of programs and libraries (listed below):

The following extra packages will be installed:

dpkg-dev freeglut3 g++ g++-4.6 libalgorithm-diff-perl libalgorithm-diff-xs-perl

libalgorithm-merge-perl libdpkg-perl libdrm-dev libgl1-mesa-dev libice-dev libkms1 libllvm3.0

libpthread-stubs0 libpthread-stubs0-dev libsm-dev libstdc++6-4.6-dev libtimedate-perl libx11-doc

libxau-dev libxcb1-dev libxdmcp-dev libxext-dev libxmu-headers libxt-dev mesa-common-dev

x11proto-core-dev x11proto-input-dev x11proto-kb-dev x11proto-xext-dev xorg-sgml-doctools

xserver-xorg xserver-xorg-core xserver-xorg-input-evdev xtrans-dev

Suggested packages:

debian-keyring g++-multilib g++-4.6-multilib gcc-4.6-doc libstdc++6-4.6-dbg libglide3

libstdc++6-4.6-doc libxcb-doc xfonts-100dpi xfonts-75dpi

The following packages will be REMOVED:

libgl1-mesa-dri-lts-raring libgl1-mesa-glx-lts-raring libglapi-mesa-lts-raring

libxatracker1-lts-raring x11-xserver-utils-lts-raring xserver-common-lts-raring

xserver-xorg-core-lts-raring xserver-xorg-input-all-lts-raring xserver-xorg-input-evdev-lts-raring

xserver-xorg-input-mouse-lts-raring xserver-xorg-input-synaptics-lts-raring

xserver-xorg-input-vmmouse-lts-raring xserver-xorg-input-wacom-lts-raring xserver-xorg-lts-raring

xserver-xorg-video-all-lts-raring xserver-xorg-video-ati-lts-raring

xserver-xorg-video-cirrus-lts-raring xserver-xorg-video-fbdev-lts-raring

xserver-xorg-video-intel-lts-raring xserver-xorg-video-mach64-lts-raring

xserver-xorg-video-mga-lts-raring xserver-xorg-video-modesetting-lts-raring

xserver-xorg-video-neomagic-lts-raring xserver-xorg-video-nouveau-lts-raring

xserver-xorg-video-openchrome-lts-raring xserver-xorg-video-r128-lts-raring

xserver-xorg-video-radeon-lts-raring xserver-xorg-video-s3-lts-raring

xserver-xorg-video-savage-lts-raring xserver-xorg-video-siliconmotion-lts-raring

xserver-xorg-video-sis-lts-raring xserver-xorg-video-sisusb-lts-raring

xserver-xorg-video-tdfx-lts-raring xserver-xorg-video-trident-lts-raring

xserver-xorg-video-vesa-lts-raring xserver-xorg-video-vmware-lts-raring

The following NEW packages will be installed:

build-essential dpkg-dev freeglut3 freeglut3-dev g++ g++-4.6 libalgorithm-diff-perl

libalgorithm-diff-xs-perl libalgorithm-merge-perl libdpkg-perl libdrm-dev libgl1-mesa-dev

libgl1-mesa-dri libgl1-mesa-glx libglapi-mesa libglu1-mesa-dev libice-dev libkms1 libllvm3.0

libpthread-stubs0 libpthread-stubs0-dev libsm-dev libstdc++6-4.6-dev libtimedate-perl libx11-dev

libx11-doc libxau-dev libxcb1-dev libxdmcp-dev libxext-dev libxi-dev libxmu-dev libxmu-headers

libxt-dev mesa-common-dev x11proto-core-dev x11proto-input-dev x11proto-kb-dev x11proto-xext-dev

xorg-sgml-doctools xserver-xorg xserver-xorg-core xserver-xorg-input-evdev xtrans-dev

And, remarkably, that's it for the pre-install.
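If you want to double-check that the pre-install packages all landed, a rough sketch like the one below can list anything still missing. The helper names are hypothetical (mine, not from any tool); the package list is the one from the apt-get line above, and the check relies on dpkg-query being available, as it is on a stock Ubuntu install.

```shell
# Packages from the corrected apt-get line in this section.
PKGS="freeglut3-dev build-essential libx11-dev libxmu-dev libxi-dev libgl1-mesa-glx libglu1-mesa libglu1-mesa-dev libglapi-mesa libgl1-mesa-dri"

# pkg_installed NAME: succeed if dpkg reports NAME as fully installed.
pkg_installed() {
    dpkg-query -W -f='${Status}' "$1" 2>/dev/null | grep -q 'install ok installed'
}

# missing_packages CHECK NAME...: print each NAME for which the CHECK command fails.
missing_packages() {
    check="$1"; shift
    for pkg in "$@"; do
        "$check" "$pkg" || printf '%s\n' "$pkg"
    done
}

# Only attempt the real check where dpkg-query exists.
if command -v dpkg-query >/dev/null 2>&1; then
    missing="$(missing_packages pkg_installed $PKGS)"
    if [ -z "$missing" ]; then
        echo "all pre-install packages present"
    else
        echo "still missing:"
        echo "$missing"
    fi
fi
```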

4. CUDA Toolkit 5.5(.22) Install

The CUDA Toolkit install starts with its 810 MB download at developer.NVIDIA.com/cuda-downloads.

Obviously, be aware of the 32- and 64-bit options. Also, the .deb doesn't currently download, leaving you to grab the .run file (same difference, I haven't bothered to find out why the .deb doesn't fly yet).

Off to your Terminal and into the Downloads folder:

user@host:~/$ cd Downloads

user@host:~/Downloads$ chmod +x cuda_5.5.22_linux_64.run

user@host:~/Downloads$ sudo ./cuda_5.5.22_linux_64.run

Which will produce:

Logging to /tmp/cuda_install_14755.log

Using more to view the EULA.

End User License Agreement

————————–

. . .

and cannot be linked to any personally identifiable

information. Personally identifiable information such as your

username or hostname is not collected.

————————————————————-

Finally, some input to be had after the scrolling:

Do you accept the previously read EULA? (accept/decline/quit): accept

Install NVIDIA Accelerated Graphics Driver for Linux-x86_64 319.37? ((y)es/(n)o/(q)uit): n

Install the CUDA 5.5 Toolkit? ((y)es/(n)o/(q)uit): y

Enter Toolkit Location [ default is /usr/local/cuda-5.5 ]:

Install the CUDA 5.5 Samples? ((y)es/(n)o/(q)uit): y

Enter CUDA Samples Location [ default is /home/user/NVIDIA_CUDA-5.5_Samples ]:

NOTE 1 (driver prompt): Don't install the NVIDIA Accelerated Graphics Driver! Ubuntu already installed it in Section 2.

NOTE 2 (Toolkit prompt): Yes, install the Toolkit.

NOTE 3 (Toolkit location): I accepted the default, /usr/local/cuda-5.5, and will assume this location for all of the below, including when setting the PATH.

NOTE 4 (Samples prompt): I installed the samples for testing (and found a few extra things that need installation for them).

NOTE 5 (Samples location): Default is fine. Once built and tested, the samples can be deleted (although the Mandelbrot is a keeper).

Installing the CUDA Toolkit in /usr/local/cuda-5.5 …

Installing the CUDA Samples in /home/user/NVIDIA_CUDA-5.5_Samples …

Copying samples to /home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples now…

Finished copying samples.

===========

= Summary =

===========

Driver: Not Selected

Toolkit: Installed in /usr/local/cuda-5.5

Samples: Installed in /home/user/NVIDIA_CUDA-5.5_Samples

* Please make sure your PATH includes /usr/local/cuda-5.5/bin

* Please make sure your LD_LIBRARY_PATH

* for 32-bit Linux distributions includes /usr/local/cuda-5.5/lib

* for 64-bit Linux distributions includes /usr/local/cuda-5.5/lib64:/lib

* OR

* for 32-bit Linux distributions add /usr/local/cuda-5.5/lib

* for 64-bit Linux distributions add /usr/local/cuda-5.5/lib64 and /lib

* to /etc/ld.so.conf and run ldconfig as root

* To uninstall CUDA, remove the CUDA files in /usr/local/cuda-5.5

* Installation Complete

Please see CUDA_Getting_Started_Linux.pdf in /usr/local/cuda-5.5/doc/pdf for detailed information on setting up CUDA.

***WARNING: Incomplete installation! This installation did not install the CUDA Driver. A driver of version at least 319.00 is required for CUDA 5.5 functionality to work.

To install the driver using this installer, run the following command, replacing <CudaInstaller> with the name of this run file:

sudo <CudaInstaller>.run -silent -driver

Logfile is /tmp/cuda_install_14755.log

And ignore the WARNING.

As per the "make sure" above, add the CUDA distro folders to your path and LD_LIBRARY_PATH (I chose not to modify ld.so.conf)

user@host:~/Downloads$ cd

user@host:~/$ nano .bashrc

Add the PATH and LD_LIBRARY_PATH as follows:

PATH=$PATH:/usr/local/cuda-5.5/bin

LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/cuda-5.5/lib64:/lib

And then source the .bashrc file.

user@host:~/$ source .bashrc
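One caveat worth a sketch: if LD_LIBRARY_PATH was not already an exported variable in your shell, a plain assignment in .bashrc sets it only for the shell itself and not for the programs you launch. The lines below are one hedged way to write the same settings with export, and without adding duplicate entries every time .bashrc is sourced. The append_dir helper and the CUDA_HOME variable are names I made up for illustration.

```shell
# append_dir CURRENT DIR: print CURRENT with DIR appended, unless DIR is already listed.
append_dir() {
    case ":$1:" in
        *":$2:"*) printf '%s\n' "$1" ;;        # already present, leave unchanged
        "::")     printf '%s\n' "$2" ;;        # CURRENT was empty
        *)        printf '%s\n' "$1:$2" ;;
    esac
}

# Assumption: the Toolkit was installed in the default location used throughout this guide.
CUDA_HOME=/usr/local/cuda-5.5

PATH="$(append_dir "$PATH" "$CUDA_HOME/bin")"
LD_LIBRARY_PATH="$(append_dir "${LD_LIBRARY_PATH:-}" "$CUDA_HOME/lib64")"
LD_LIBRARY_PATH="$(append_dir "$LD_LIBRARY_PATH" /lib)"

# export makes both variables visible to child processes such as nvcc and the samples.
export PATH LD_LIBRARY_PATH
```

After sourcing .bashrc, `echo $LD_LIBRARY_PATH` and `which nvcc` are quick ways to see whether the settings took.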

5. NVIDIA_CUDA-5.5_Samples (And Finishing The Toolkit Install To Build CudaMiner)

The next set of installs and file modifications came from attempting to build the Samples in the NVIDIA_CUDA-5.5_Samples (or NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples depending on how your install did it) library, during which time I think I managed to hit all of the post-Toolkit install modifications needed to make the CudaMiner build problem-free. The OpenMPI install is optional, but I do hate error messages.

5A. Error 1: /usr/bin/ld: cannot find -lcuda

My first make attempt produced the following error:

user@host:~/$ cd NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples$ make

make[1]: Entering directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/0_Simple/asyncAPI'

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -I../../common/inc -m64 -gencode arch=compute_10,code=sm_10 -gencode arch=compute_20,code=sm_20 -gencode arch=compute_30,code=sm_30 -gencode arch=compute_35,code=\"sm_35,compute_35\" -o asyncAPI.o -c asyncAPI.cu

. . .

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -I../../common/inc -m64 -o vectorAddDrv.o -c vectorAddDrv.cpp

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -m64 -o vectorAddDrv vectorAddDrv.o -L/usr/lib/nvidia-current -lcuda

/usr/bin/ld: cannot find -lcuda

collect2: ld returned 1 exit status

make[1]: *** [vectorAddDrv] Error 1

make[1]: Leaving directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/0_Simple/vectorAddDrv'

make: *** [0_Simple/vectorAddDrv/Makefile.ph_build] Error 2

This is solved by making a symbolic link for libcuda.so out of /usr/lib/nvidia-319/ and into /usr/lib/ (the directory name is lowercase, and paths are case-sensitive).

NOTE: It doesn't matter what directory you do this from. I've left off the NVIDIA_CUDA-5.5_Samples/ yadda yadda below.

user@host:~/$ sudo ln -s /usr/lib/nvidia-319/libcuda.so /usr/lib/libcuda.so

If you're working through the build process and hit the error, run a "make clean" before rerunning.
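Since the driver directory name depends on which driver version Ubuntu installed, it can help to locate libcuda.so first and only create the link if nothing is there yet. The following is a minimal sketch of that idea; link_if_missing is a hypothetical helper of mine, and the demo at the bottom runs in a throwaway temp directory rather than touching /usr/lib.

```shell
# link_if_missing TARGET LINK: create LINK -> TARGET unless LINK already exists.
link_if_missing() {
    target="$1"; link="$2"
    if [ ! -e "$target" ]; then
        echo "missing target: $target" >&2
        return 1
    fi
    if [ -e "$link" ] || [ -L "$link" ]; then
        echo "already present: $link"
        return 0
    fi
    ln -s "$target" "$link" && echo "linked: $link -> $target"
}

# To find the real library on your machine (run this yourself; the path varies):
#   find /usr/lib -maxdepth 2 -name 'libcuda.so' 2>/dev/null
# The real link needs root, e.g. the sudo ln -s command shown in this section.

# Harmless demonstration in a temporary directory:
demo="$(mktemp -d)"
touch "$demo/libcuda.so"
link_if_missing "$demo/libcuda.so" "$demo/link.so"
link_if_missing "$demo/libcuda.so" "$demo/link.so"    # second call is a no-op
rm -rf "$demo"
```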

5B. WARNING – No MPI compiler found.

The second build attempt produced the MPI Warning above.

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples$ make

make[1]: Entering directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/0_Simple/asyncAPI'

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -I../../common/inc -m64 -gencode arch=compute_10,code=sm_10 -gencode arch=compute_20,code=sm_20 -gencode arch=compute_30,code=sm_30 -gencode arch=compute_35,code=\"sm_35,compute_35\" -o asyncAPI.o -c asyncAPI.cu

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -m64 -o asyncAPI asyncAPI.o

. . .

cp simpleCubemapTexture ../../bin/x86_64/linux/release

make[1]: Leaving directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/0_Simple/simpleCubemapTexture'

———————————————————————————————–

WARNING – No MPI compiler found.

———————————————————————————————–

CUDA Sample "simpleMPI" cannot be built without an MPI Compiler.

This will be a dry-run of the Makefile.

For more information on how to set up your environment to build and run this

sample, please refer the CUDA Samples documentation and release notes

———————————————————————————————–

make[1]: Entering directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/0_Simple/simpleMPI'

[@] mpicxx -I../../common/inc -o simpleMPI.o -c simpleMPI.cpp

. . .

mkdir -p ../../bin/x86_64/linux/release

cp histEqualizationNPP ../../bin/x86_64/linux/release

make[1]: Leaving directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/7_CUDALibraries/histEqualizationNPP'

Finished building CUDA samples

But otherwise finishes successfully.

To get around this warning, install OpenMPI (which is needed for multi-board GROMACS runs anyway but, again, is not needed for CudaMiner). The specific issue is the need for mpicc, which is in libopenmpi-dev (not openmpi-bin or openmpi-common).

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples$ mpicc

The program 'mpicc' can be found in the following packages:

* lam4-dev

* libmpich-mpd1.0-dev

* libmpich-shmem1.0-dev

* libmpich1.0-dev

* libmpich2-dev

* libopenmpi-dev

* libopenmpi1.5-dev

Try: sudo apt-get install <selected package>

For completeness, I grab all three (and I'm ignoring the NVIDIA_CUDA-5.5_Samples directory structure below).

user@host:~/$ sudo apt-get install openmpi-bin openmpi-common libopenmpi-dev

Running mpicc will now produce the following (so it's there):

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples$ mpicc

gcc: fatal error: no input files

compilation terminated.

Now run a "make clean" if needed and make. The build should go without problem.

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples$ make

make[1]: Entering directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/0_Simple/asyncAPI'

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -I../../common/inc -m64 -gencode arch=compute_10,code=sm_10 -gencode arch=compute_20,code=sm_20 -gencode arch=compute_30,code=sm_30 -gencode arch=compute_35,code=\"sm_35,compute_35\" -o asyncAPI.o -c asyncAPI.cu

"/usr/local/cuda-5.5"/bin/nvcc -ccbin g++ -m64 -o asyncAPI asyncAPI.o

mkdir -p ../../bin/x86_64/linux/release

. . .

mkdir -p ../../bin/x86_64/linux/release

cp histEqualizationNPP ../../bin/x86_64/linux/release

make[1]: Leaving directory `/home/user/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/7_CUDALibraries/histEqualizationNPP'

Finished building CUDA samples
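Before kicking off another full build of the samples, a quick check for the MPI compiler wrappers can save a wait. This is only a sketch: have_tool is my own helper name, and I check mpicxx alongside mpicc because mpicxx is the command that appears in the dry-run output above.

```shell
# have_tool NAME: succeed if NAME resolves to a command on the current PATH.
have_tool() {
    command -v "$1" >/dev/null 2>&1
}

for tool in mpicc mpicxx; do
    if have_tool "$tool"; then
        echo "$tool: $(command -v "$tool")"
    else
        echo "$tool: not found (try installing libopenmpi-dev as described above)"
    fi
done
```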

5C. Needed post-processing (lib glut, cuda.conf, NVIDIA.conf, and ldconfig)

The next round of problems stemmed from not being able to run the randomFog program in the new ~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release folder. I suspect the steps taken to remedy this also make all future CUDA-specific work easier, so I list the issues and clean-up steps below.

Out of the list of built samples, I selected a few that worked without issue and, finally, randomFog, which decidedly had issues:

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/$ cd bin/x86_64/linux/release

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release$ ls

alignedTypes HSOpticalFlow simpleCUBLAS

asyncAPI imageDenoising simpleCUDA2GL

bandwidthTest imageSegmentationNPP simpleCUFFT

batchCUBLAS inlinePTX simpleDevLibCUBLAS

bicubicTexture interval simpleGL

bilateralFilter jpegNPP simpleHyperQ

bindlessTexture lineOfSight simpleIPC

binomialOptions Mandelbrot simpleLayeredTexture

BlackScholes marchingCubes simpleMPI

boxFilter matrixMul simpleMultiCopy

boxFilterNPP matrixMulCUBLAS simpleMultiGPU

cdpAdvancedQuicksort matrixMulDrv simpleP2P

cdpBezierTessellation matrixMulDynlinkJIT simplePitchLinearTexture

cdpLUDecomposition matrixMul_kernel64.ptx simplePrintf

cdpQuadtree MC_EstimatePiInlineP simpleSeparateCompilation

cdpSimplePrint MC_EstimatePiInlineQ simpleStreams

cdpSimpleQuicksort MC_EstimatePiP simpleSurfaceWrite

clock MC_EstimatePiQ simpleTemplates

concurrentKernels MC_SingleAsianOptionP simpleTexture

conjugateGradient mergeSort simpleTexture3D

conjugateGradientPrecond MersenneTwisterGP11213 simpleTextureDrv

convolutionFFT2D MonteCarloMultiGPU simpleTexture_kernel64.ptx

convolutionSeparable nbody simpleVoteIntrinsics

convolutionTexture newdelete simpleZeroCopy

cppIntegration oceanFFT smokeParticles

cppOverload particles SobelFilter

cudaOpenMP postProcessGL SobolQRNG

dct8x8 ptxjit sortingNetworks

deviceQuery quasirandomGenerator stereoDisparity

deviceQueryDrv radixSortThrust template

dwtHaar1D randomFog template_runtime

dxtc recursiveGaussian threadFenceReduction

eigenvalues reduction threadMigration

fastWalshTransform scalarProd threadMigration_kernel64.ptx

FDTD3d scan transpose

fluidsGL segmentationTreeThrust vectorAdd

freeImageInteropNPP shfl_scan vectorAddDrv

FunctionPointers simpleAssert vectorAdd_kernel64.ptx

grabcutNPP simpleAtomicIntrinsics volumeFiltering

histEqualizationNPP simpleCallback volumeRender

histogram simpleCubemapTexture

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release$ ./randomFog

And you get the following error:

./randomFog: error while loading shared libraries: libcurand.so.5.5: cannot open shared object file: No such file or directory

I originally thought this error might have something to do with libglut based on other install sites I ran across. I therefore took the step of adding the symbolic link from /usr/lib/x86_64-linux-gnu to /usr/lib:

user@host:~$ sudo ln -s /usr/lib/x86_64-linux-gnu/libglut.so.3 /usr/lib/libglut.so

That said, same issue:

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release$ ./randomFog

./randomFog: error while loading shared libraries: libcurand.so.5.5: cannot open shared object file: No such file or directory

I then found references to adding a cuda.conf file to /etc/ld.so.conf.d – and so did that (doesn't help but it came up enough that I suspect it doesn't hurt either).

user@host:~$ sudo nano /etc/ld.so.conf.d/cuda.conf

This file should contain the following:

/usr/local/cuda-5.5/lib64

/usr/local/cuda-5.5/lib

Which also didn't help.

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release$ ./randomFog

./randomFog: error while loading shared libraries: libcurand.so.5.5: cannot open shared object file: No such file or directory

To find the location (or presence) of libcurand, run ldconfig -v:

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release$ ldconfig -v

/sbin/ldconfig.real: Path `/lib/x86_64-linux-gnu' given more than once

/sbin/ldconfig.real: Path `/usr/lib/x86_64-linux-gnu' given more than once

/usr/local/cuda-5.5/lib64:

libcuinj64.so.5.5 -> libcuinj64.so.5.5.22

libcufft.so.5.5 -> libcufft.so.5.5.22

libcurand.so.5.5 -> libcurand.so.5.5.22

libcusparse.so.5.5 -> libcusparse.so.5.5.22

. . .

libnvToolsExt.so.1 -> libnvToolsExt.so.1.0.0

/usr/local/cuda-5.5/lib:

libcufft.so.5.5 -> libcufft.so.5.5.22

libcurand.so.5.5 -> libcurand.so.5.5.22

libcusparse.so.5.5 -> libcusparse.so.5.5.22

. . .

/usr/lib/nvidia-319/tls: (hwcap: 0x8000000000000000)

libnvidia-tls.so.319.32 -> libnvidia-tls.so.319.32

/usr/lib32/nvidia-319/tls: (hwcap: 0x8000000000000000)

libnvidia-tls.so.319.32 -> libnvidia-tls.so.319.32

/sbin/ldconfig.real: Can't create temporary cache file /etc/ld.so.cache~: Permission denied

Present twice. Instead of risking making multiple symbolic links as I walked through the dependency gauntlet, I stumbled across another reference in the form of a new /etc/ld.so.conf.d/NVIDIA.conf that contains the same content as cuda.conf (so one may not be needed, but I didn't bother to backtrack to see. Happy to change the page if someone says otherwise).

user@host:~/$ sudo nano /etc/ld.so.conf.d/NVIDIA.conf

/usr/local/cuda-5.5/lib64

/usr/local/cuda-5.5/lib

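Then run ldconfig as root to rebuild the linker cache. Files in /etc/ld.so.conf.d have no effect until the cache is rebuilt (note the "Permission denied" on /etc/ld.so.cache~ when ldconfig -v was run without sudo above), which is likely why adding cuda.conf alone appeared not to help.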

user@host:~/$ sudo ldconfig

With that, randomFog works just fine (and you can assume that a problem in one is a problem in several). Having not taken the full symbolic link route in favor of adding to /etc/ld.so.conf.d, I'm assuming I hit most of the potential errors for the other programs.

user@host:~/NVIDIA_CUDA-5.5_Samples/NVIDIA_CUDA-5.5_Samples/bin/x86_64/linux/release$ ./randomFog

Random Fog

==========

CURAND initialized

Random number visualization
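For chasing "cannot open shared object file" errors like the one in this section, ldd lists every library a binary wants and marks the ones the loader cannot find. The sketch below filters that output down to just the missing names. The missing_libs helper is my own, and the sample text fed to it is illustrative (modelled on the libcurand error above), not captured output.

```shell
# missing_libs: read ldd output on stdin and print the names of unresolved libraries.
missing_libs() {
    awk '/=> not found/ { print $1 }'
}

# Illustrative input only; on a real machine you would run:
#   ldd ./randomFog | missing_libs
sample_ldd_output='	libcurand.so.5.5 => not found
	libc.so.6 => /lib/x86_64-linux-gnu/libc.so.6 (0x00007f0000000000)'
printf '%s\n' "$sample_ldd_output" | missing_libs

# After "sudo ldconfig", the cache can be queried without root to confirm the fix.
if command -v ldconfig >/dev/null 2>&1; then
    ldconfig -p 2>/dev/null | grep libcurand || echo "libcurand is not in the linker cache"
fi
```

An empty result from `ldd ./randomFog | missing_libs` suggests the loader can now resolve everything the sample needs.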

6. Build CudaMiner

The good news is that there are only a few more steps. The bad news is that any errors you come across in your attempt to build CudaMiner that relate to NOT having done the above are (likely) not represented here, so hopefully your search was sufficiently vague.

Download CudaMiner-master.zip from Christian Buchner's github account. Extracting CudaMiner-master.zip (with unzip, not gunzip. Damn Windows users) and running configure produces only one obvious error.

user@host:~/WHEREVER_YOU_ARE/$ cd

user@host:~/$ cd Downloads

user@host:~/Downloads$ unzip CudaMiner-master.zip

user@host:~/Downloads$ cd CudaMiner-master/

user@host:~/Downloads/CudaMiner-master$ chmod a+wrx configure

user@host:~/Downloads/CudaMiner-master$ ./configure

checking build system type… x86_64-unknown-linux-gnu

checking host system type… x86_64-unknown-linux-gnu

checking target system type… x86_64-unknown-linux-gnu

checking for a BSD-compatible install… /usr/bin/install -c

. . .

checking for gawk… (cached) mawk

checking for curl-config… no

checking whether libcurl is usable… no

configure: error: Missing required libcurl >= 7.15.2

This error is remedied by installing libcurl4-gnutls-dev.

user@host:~/Downloads/CudaMiner-master$ sudo apt-get install libcurl4-gnutls-dev

This adds and modifies the following on my clean 12.04 LTS install and update:

The following packages were automatically installed and are no longer required:

gir1.2-ubuntuoneui-3.0 libxcb-dri2-0 libxrandr-ltsr2 libubuntuoneui-3.0-1 libxvmc1 thunderbird-globalmenu

libllvm3.2

Use 'apt-get autoremove' to remove them.

The following extra packages will be installed:

comerr-dev krb5-multidev libgcrypt11-dev libgnutls-dev libgnutls-openssl27 libgnutlsxx27 libgpg-error-dev

libgssrpc4 libidn11-dev libkadm5clnt-mit8 libkadm5srv-mit8 libkdb5-6 libkrb5-dev libldap2-dev

libp11-kit-dev librtmp-dev libtasn1-3-dev zlib1g-dev

Suggested packages:

krb5-doc libcurl3-dbg libgcrypt11-doc gnutls-doc gnutls-bin krb5-user

The following NEW packages will be installed:

comerr-dev krb5-multidev libcurl4-gnutls-dev libgcrypt11-dev libgnutls-dev libgnutls-openssl27

libgnutlsxx27 libgpg-error-dev libgssrpc4 libidn11-dev libkadm5clnt-mit8 libkadm5srv-mit8 libkdb5-6

libkrb5-dev libldap2-dev libp11-kit-dev librtmp-dev libtasn1-3-dev zlib1g-dev
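The configure check above wants libcurl >= 7.15.2 and looks for curl-config, so one way to see whether the install satisfied it is to compare the reported version yourself. This is a sketch under a couple of assumptions: version_ge is a hypothetical helper of mine, it leans on GNU sort's -V (version sort) option, and it expects curl-config --version to print something of the form "libcurl X.Y.Z".

```shell
# version_ge A B: succeed if version A is greater than or equal to version B.
# Relies on GNU sort -V; the smaller of the two versions sorts first.
version_ge() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

REQUIRED=7.15.2    # minimum from the configure error message

if command -v curl-config >/dev/null 2>&1; then
    found="$(curl-config --version)"     # e.g. "libcurl 7.22.0"
    found="${found#libcurl }"            # strip the leading "libcurl "
    if version_ge "$found" "$REQUIRED"; then
        echo "libcurl $found satisfies >= $REQUIRED"
    else
        echo "libcurl $found is older than $REQUIRED"
    fi
else
    echo "curl-config not found; configure will report libcurl as missing"
fi
```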

After a make clean, configure and make for CudaMiner went without problem.

user@host:~/Downloads/CudaMiner-master$ make clean

user@host:~/Downloads/CudaMiner-master$ ./configure

checking build system type… x86_64-unknown-linux-gnu

checking host system type… x86_64-unknown-linux-gnu

checking target system type… x86_64-unknown-linux-gnu

checking for a BSD-compatible install… /usr/bin/install -c

checking whether build environment is sane… yes

checking for a thread-safe mkdir -p… /bin/mkdir -p

. . .

configure: creating ./config.status

config.status: creating Makefile

config.status: creating compat/Makefile

config.status: creating compat/jansson/Makefile

config.status: creating cpuminer-config.h

config.status: cpuminer-config.h is unchanged

config.status: executing depfiles commands

user@host:~/Downloads/CudaMiner-master$ make

make all-recursive

make[1]: Entering directory `/home/user/Downloads/CudaMiner-master'

Making all in compat

make[2]: Entering directory `/home/user/Downloads/CudaMiner-master/compat'

Making all in jansson

make[3]: Entering directory `/home/user/Downloads/CudaMiner-master/compat/jansson'

. . .

./spinlock_kernel.cu(387): Warning: Cannot tell what pointer points to, assuming global memory space

./spinlock_kernel.cu(387): Warning: Cannot tell what pointer points to, assuming global memory space

./spinlock_kernel.cu(387): Warning: Cannot tell what pointer points to, assuming global memory space

. . .

nvcc -g -O2 -Xptxas "-abi=no -v" -arch=compute_10 --maxrregcount=64 --ptxas-options=-v -I./compat/jansson -o legacy_kernel.o -c legacy_kernel.cu

./legacy_kernel.cu(310): Warning: Cannot tell what pointer points to, assuming global memory space

./legacy_kernel.cu(310): Warning: Cannot tell what pointer points to, assuming global memory space

./legacy_kernel.cu(310): Warning: Cannot tell what pointer points to, assuming global memory space

. . .

g++ -g -O2 -pthread -L/usr/local/cuda/lib64 -o cudaminer cudaminer-cpu-miner.o cudaminer-util.o cudaminer-sha2.o cudaminer-scrypt.o salsa_kernel.o spinlock_kernel.o legacy_kernel.o fermi_kernel.o kepler_kernel.o test_kernel.o titan_kernel.o -L/usr/lib/x86_64-linux-gnu -lcurl -Wl,-Bsymbolic-functions -Wl,-z,relro compat/jansson/libjansson.a -lpthread -lcudart -fopenmp

make[2]: Leaving directory `/home/user/Downloads/CudaMiner-master'

make[1]: Leaving directory `/home/user/Downloads/CudaMiner-master'

A few warnings (well, several hundred of the same warning) appeared during the build process. They don't affect the program's operation; I'm just pointing them out above.

With luck, you should be able to run a benchmark calculation immediately.

user@host:~/Downloads/CudaMiner-master$ ./cudaminer -d 0 -i 0 --benchmark

*** CudaMiner for NVIDIA GPUs by Christian Buchner ***

This is version 2013-12-18 (beta)

based on pooler-cpuminer 2.3.2 (c) 2010 Jeff Garzik, 2012 pooler

Cuda additions Copyright 2013 Christian Buchner

My donation address: LKS1WDKGED647msBQfLBHV3Ls8sveGncnm

[2013-12-25 00:05:38] 1 miner threads started, using 'scrypt' algorithm.

[2013-12-25 00:05:58] GPU #0: GeForce GTX 690 with compute capability 3.0

[2013-12-25 00:05:58] GPU #0: the 'K' kernel requires single memory allocation

[2013-12-25 00:05:58] GPU #0: interactive: 0, tex-cache: 0 , single-alloc: 1

[2013-12-25 00:05:58] GPU #0: Performing auto-tuning (Patience…)

[2013-12-25 00:05:58] GPU #0: maximum warps: 447

[2013-12-25 00:07:40] GPU #0: 288.38 khash/s with configuration K27x4

[2013-12-25 00:07:40] GPU #0: using launch configuration K27x4

[2013-12-25 00:07:40] GPU #0: GeForce GTX 690, 6912 hashes, 0.06 khash/s

[2013-12-25 00:07:40] Total: 0.06 khash/s

[2013-12-25 00:07:40] GPU #0: GeForce GTX 690, 3456 hashes, 141.56 khash/s

[2013-12-25 00:07:40] Total: 141.56 khash/s

[2013-12-25 00:07:43] GPU #0: GeForce GTX 690, 708480 hashes, 251.11 khash/s

[2013-12-25 00:07:43] Total: 251.11 khash/s

[2013-12-25 00:07:48] GPU #0: GeForce GTX 690, 1257984 hashes, 251.19 khash/s

[2013-12-25 00:07:48] Total: 251.19 khash/s

. . .
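When comparing settings, it is handy to pull just the last reported hash rate out of a saved benchmark log. The sketch below does that for lines in the "Total: N khash/s" format shown above. The last_total helper and the bench.log file name are my own inventions, and I am assuming the miner's messages go to stderr (hence the 2>&1 in the comment); adjust if your build behaves differently.

```shell
# last_total: read miner output on stdin and print the final "Total:" rate in khash/s.
last_total() {
    awk '/Total:/ { v = $(NF - 1) } END { if (v != "") print v }'
}

# To capture a real run (stop it with Ctrl+C once the rate settles):
#   ./cudaminer -d 0 -i 0 --benchmark 2>&1 | tee bench.log
#   last_total < bench.log

# Demonstration using two lines copied from the benchmark output above:
printf '%s\n' \
    '[2013-12-25 00:07:43] Total: 251.11 khash/s' \
    '[2013-12-25 00:07:48] Total: 251.19 khash/s' | last_total
```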

Then spend the rest of the week optimizing parameters for your particular card and mining proclivity:

user@host:~/Downloads/CudaMiner-master$ ./cudaminer -h

*** CudaMiner for NVIDIA GPUs by Christian Buchner ***

This is version 2013-12-18 (beta)

based on pooler-cpuminer 2.3.2 (c) 2010 Jeff Garzik, 2012 pooler

Cuda additions Copyright 2013 Christian Buchner

My donation address: LKS1WDKGED647msBQfLBHV3Ls8sveGncnm

Usage: cudaminer [OPTIONS]

Options:

-a, --algo=ALGO specify the algorithm to use

scrypt scrypt(1024, 1, 1) (default)

sha256d SHA-256d

-o, --url=URL URL of mining server (default: http://127.0.0.1:9332/)

-O, --userpass=U:P username:password pair for mining server

-u, --user=USERNAME username for mining server

-p, --pass=PASSWORD password for mining server

--cert=FILE certificate for mining server using SSL

-x, --proxy=[PROTOCOL://]HOST[:PORT] connect through a proxy

-t, --threads=N number of miner threads (default: number of processors)

-r, --retries=N number of times to retry if a network call fails

(default: retry indefinitely)

-R, --retry-pause=N time to pause between retries, in seconds (default: 30)

-T, --timeout=N network timeout, in seconds (default: 270)

-s, --scantime=N upper bound on time spent scanning current work when

long polling is unavailable, in seconds (default: 5)

--no-longpoll disable X-Long-Polling support

--no-stratum disable X-Stratum support

-q, --quiet disable per-thread hashmeter output

-D, --debug enable debug output

-P, --protocol-dump verbose dump of protocol-level activities

--no-autotune disable auto-tuning of kernel launch parameters

-d, --devices takes a comma separated list of CUDA devices to use.

This implies the -t option with the threads set to the

number of devices.

-l, --launch-config gives the launch configuration for each kernel

in a comma separated list, one per device.

-i, --interactive comma separated list of flags (0/1) specifying

which of the CUDA device you need to run at inter-

active frame rates (because it drives a display).

-C, --texture-cache comma separated list of flags (0/1) specifying

which of the CUDA devices shall use the texture

cache for mining. Kepler devices will profit.

-m, --single-memory comma separated list of flags (0/1) specifying

which of the CUDA devices shall allocate their

scrypt scratchbuffers in a single memory block.

-H, --hash-parallel 1 to enable parallel SHA256 hashing on the CPU. May

use more CPU overall, but distributes hashing load

neatly across all CPU cores. 0 is now the default

which assigns one static CPU core to each GPU.

-S, --syslog use system log for output messages

-B, --background run the miner in the background

--benchmark run in offline benchmark mode

-c, --config=FILE load a JSON-format configuration file

-V, --version display version information and exit

-h, --help display this help text and exit

I've only had a few problems with CudaMiner to date. The most annoying problem has been the inability to run tests to optimize card performance without having to put the machine to sleep and wake it back up again (better than a full restart). CudaMiner will, without this, simply hang at the following line:

[2013-12-25 00:49:08] 1 miner threads started, using 'scrypt' algorithm.

The sleep + wake does the trick, although I'd love to find out how to not have this happen.

The second annoying problem was:

". . . result does not validate on CPU (i=NNNN, s=0)!"

This error is due to your launch configuration (for example "K16x16", the most prominent one I've found in Google searches, so placed here to help others find it; your values may vary) being too much for the card, so vary the values down a spell until you no longer get the error. There's a wealth of proper card settings available on the litecoin hardware comparison site, so I direct you there:

litecoin.info/Mining_hardware_comparison
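If you'd rather step the launch configuration down systematically than by hand, a small loop can at least print the benchmark commands to try. This is a rough sketch only: launch_candidates is a hypothetical helper, and the choice to lower the first number in fixed steps is my own illustration, not a tuning recommendation from CudaMiner. The -l, -d, -i, and --benchmark options are the ones listed in the help text above.

```shell
# launch_candidates PREFIX BLOCKS WARPS STEP:
# print configs like K16x16, lowering BLOCKS by STEP until it would reach zero.
launch_candidates() {
    prefix="$1"; blocks="$2"; warps="$3"; step="$4"
    while [ "$blocks" -gt 0 ]; do
        printf '%s%dx%d\n' "$prefix" "$blocks" "$warps"
        blocks=$((blocks - step))
    done
}

# Print (not run) one benchmark command per candidate, starting from K16x16.
for cfg in $(launch_candidates K 16 16 4); do
    echo "./cudaminer -d 0 -i 0 -l $cfg --benchmark"
done
```

Run the printed commands one at a time and keep the largest configuration that no longer produces the validation error.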

7. And Finally. . .

By all accounts, CudaMiner is a much faster mining tool for NVIDIA owners. To that end, please note that Christian Buchner has made your life much easier (and your virtual wallet hopefully a little fuller). As mentioned above, his donation address is:

LKS1WDKGED647msBQfLBHV3Ls8sveGncnm

Do consider showing him some love.

This post was made in the interest of helping others get their mining going. If this guide helped and you score blocks early, my wallet's always open as well (can't blame someone for trying).

Litecoin: LTmicpwpGgrZiyiJmMUdyqq4CG8CqiBqrm

Dogecoin: DBwXMoQ4scAqZfYUJgc3SYqTED7eywSHdB

The timing for getting the guide up is based on a new mining operation here in Syracuse, NY in the form of Salt City Miners, currently the Cloud City of mining operations (also appropriate for the weather conditions). Parties interested in adding their power to the fold are more than welcome to sign up at miner.saltcityminers.com/.

And don't forget the Meetup group: Syracuse Meetup Group – Bitcoin's of New York – Miner's of Syracuse